Everything must be high value-added work. But.

I used to have very long sessions of focused, uninterrupted “work”. Programming mostly, but not necessarily. I have always thought that with enough time and effort I can get passionate about (almost) anything, and it has happened many times; I still have quite deep knowledge of some topics for no apparent reason other than pure curiosity or a self-induced rabbit hole.

I remember early mornings turning into afternoons and early nights, with only the bare minimum of breaks for physiological needs.

My entire setup is still meant for and built around that meditative state, and keeps evolving from these roots. (N)Vim, tmux, carefully tweaked keymaps and configurations, (recently introduced) split keyboard layers. Everything is in place for a specific reason: to delegate as much as possible to the ancestral parts of my brain dedicated to muscle memory and coordination, so I can focus on the single thing I’m doing.

But something is dramatically changing, everywhere. And although part of it is self-induced, most of it is increasingly structural, rooted in how we work and how we are evolving socially.

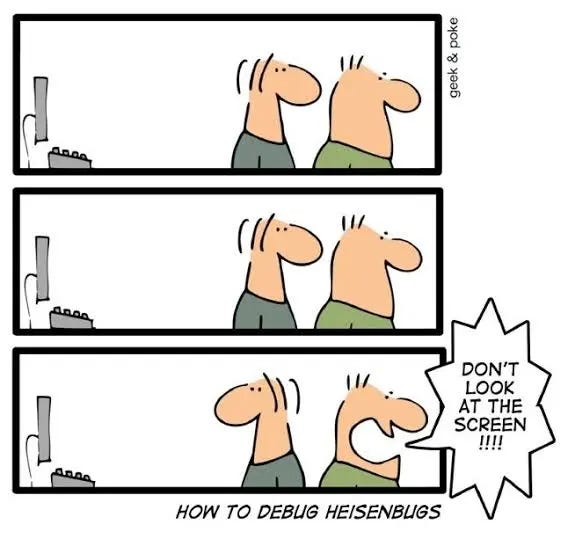

I remember how my work, and my free time, used to flow in that sense. I had waves of focus, creativity, chaos, concern, release; all of these emotional states work well together in a work session when they dynamically balance each other to produce an artifact, a thought, a brilliant new assumption you were previously ignoring, or whatever else. Truly mastering these emotions is also part of being a skilled worker, in any field. Mastering fear and worry IS part of a professional climber’s skills. Mastering the stress coming from a Heisenbug isn’t different: the more mature you are as a software engineer, the better you know how to manage your time, including breaks and, in general, the rest of your work and life, so you can sustain it long-term.

I’ve recently started questioning whether the way we’ve been working since the most powerful AI models came out is compatible with the standard working models. I’m not just talking about software engineering; that wouldn’t make much sense. Our profession, especially now, is getting closer and closer to that of other knowledge workers who solve problems at a higher level, and their work is being affected in a similar fashion.

I have noticed with surprise how much of the low added-value work I got rid of. And I’m happy about it when it is really low-value, for the same reason I love vim macros: there’s no reason to manually format a thousand lines of text in the same way if you can automate it.

What is happening here is different, though. These are roughly the stages we’ve seen (so far). I’ll use software as the example, but other fields can relate:

1. Write your code; AI helps you by autocompleting a few things, generating boilerplate and suggesting additions or changes.

2. Write most of your code, take the critical parts of it and ask AI in the browser for suggestions, tests, validation and brainstorm with it. You can brainstorm with AI at any point of the flow, before, during, after.

3. Chat with AI in your editor of choice; it produces part or most of the code, depending on your trust and confidence. You (probably) review it and accept/discard the changes.

4. Spin up an agent that does most of the work for you; you follow along (maybe), review and accept/discard/iterate over it.

5. Loop an agent over specifications of what you need, and grant it more or fewer privileges to do anything it needs, depending on how well you have isolated the environment or how much you trust humanity and its ability to prompt-inject you out of existence.

6. Orchestrate swarms of skilled agents to do the work you asked them to do, pretending you review the code. Ship it straight to production, your company is happy until they get hacked because of the many critical vulnerabilities “you” have introduced.

While all of this is happening in the background, you answer people on Slack (or configure an agent to prepare and send you reports with summaries and answer the less critical messages for you), skim-read a couple of important documents you want to have an idea about, support a colleague with a critical bug on the pipelines, and do a back-and-forth on the Gemini and Claude browser sessions about the new project you’re leading for the next quarter.

“All and only the most high value-added work will remain for you” they said.

The problem is that the ups and (forced) downs I used to have were vital, especially the downs: the moments when you relax some parts of the brain and focus on practicing your craft, forming an idea, shaping a diagram. They were vital for my brain to better process information, to give it space to clean up garbage and to be creative incidentally.

They weren’t vital for my mental health for the sake of it: who cares? You should care for sure, but companies don’t usually work that way. Mental health is a concern for them only if a worker’s degrading creativity produces measurable damage, especially if the worker is hard or expensive to replace. Frameworks, procedures, anti-silo initiatives and bus-factor-reducing policies are in place just for that.

Companies, in my opinion, should selfishly start thinking about this. When technology increases productivity by increasing output, it is beneficial for everyone IF AND ONLY IF the output is deterministically stable and the effort of verifying it is either trivial or grows more slowly than the output itself.
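To make that condition a bit more concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the function name `verification_keeps_up`, the idea of measuring output and verification effort at two points in time, and the numbers themselves. It only checks whether verification effort grew by a smaller factor than output did.

```python
def verification_keeps_up(outputs, verify_costs):
    """Return True if verification effort grew by a smaller factor than output.

    Hypothetical helper: `outputs` and `verify_costs` are positive
    measurements taken at the same points in time (for example, lines
    shipped per week and review hours per week). Only the first and the
    last measurement are compared.
    """
    if len(outputs) < 2 or len(outputs) != len(verify_costs):
        raise ValueError("need two or more paired measurements")
    output_growth = outputs[-1] / outputs[0]
    cost_growth = verify_costs[-1] / verify_costs[0]
    return cost_growth < output_growth


# Made-up numbers: output grows 10x in both scenarios.
cheap_verification = verification_keeps_up([100, 1000], [5, 20])   # review effort grows 4x
costly_verification = verification_keeps_up([100, 1000], [5, 80])  # review effort grows 16x
```

With these made-up numbers the first scenario satisfies the condition and the second does not: output grew tenfold in both, but in the second the review effort grew even faster.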

But this is not happening. The fundamentally, and by design, non-deterministic nature of AI models is clearly what’s needed for them to be brilliant and able to do incredible things fast. But it’s also the curse that came bundled with the gift. We (still) don’t have the privilege of huge output with minimal verification. Automated tests are not guardrails if the tests are all written by AI. LLM-as-a-Judge is not a guardrail either, as it brings non-determinism with it as a feature.

I think we’ll soon need to ask ourselves, as individuals first and as a society later: what’s the trade-off we want to accept? Low quality with high output, or high quality with less output and more sustainable work?

My 2 cents on this.

I think the core issue lies in treating 'modern' output through the lens of 'traditional' workflows.

Back in the 90s or early 2000s, when the industry was dominated by OS-level code and kernel-heavy engineering, testing had a very specific, rigid DNA. Then Web Dev arrived, and we realised that treating a web app like an OS-based system was a mistake; an API fails for reasons and in ways that a system call simply doesn't.

We are at a similar crossroads with LLMs. Whether we are using them to write code or building products on top of them, we have to accept that the entire workflow and, by extension, our effort and our Jira tickets, needs to be recalibrated.

Even the Definition of Done (DoD) has to evolve. We’re moving away from the classic "API X is in prod with Y% test coverage and docs" toward a framework based on confidence levels. This is a necessary shift because we are no longer operating in the deterministic environment that the "IF AND ONLY IF" above assumes.
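As a rough sketch of what a confidence-based DoD could look like (this is my assumption, not an established practice): run the same non-deterministic check many times and call the work done only when a conservative lower bound on its pass rate clears a threshold. The Wilson score interval is a standard statistical tool; the function names, the z-value of 1.96 (roughly 95% confidence) and the 0.95 threshold are hypothetical choices.

```python
import math


def wilson_lower_bound(passes, runs, z=1.96):
    """Lower bound of the Wilson score interval for a pass rate.

    `passes` out of `runs` repeated checks succeeded; z=1.96 corresponds
    to roughly 95% confidence. Returns 0.0 when there are no runs.
    """
    if runs == 0:
        return 0.0
    p_hat = passes / runs
    z2 = z * z
    centre = p_hat + z2 / (2 * runs)
    margin = z * math.sqrt(p_hat * (1 - p_hat) / runs + z2 / (4 * runs * runs))
    return (centre - margin) / (1 + z2 / runs)


def is_done(passes, runs, required_pass_rate=0.95):
    """Hypothetical DoD rule: done only if we are confident in the pass rate."""
    return wilson_lower_bound(passes, runs) >= required_pass_rate
```

Under this rule, 95 passes out of 100 runs is not "done" at a 0.95 threshold, because the observed rate alone does not justify that much confidence; only more runs or a higher pass rate would get there.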

If companies actually embrace this paradigm shift, throughput might stay higher than the old baseline, but "high productivity" won't look anything like the metrics we’re using today.